Copyright © 2007-2010 Hans Dockter, Adam Murdoch

Table of Contents

- 1. Introduction

- 2. Overview

- 3. Tutorials

- 4. Installing Gradle

- 5. Build Script Basics

- 5.1. Projects and tasks

- 5.2. Hello world

- 5.3. A shortcut task definition

- 5.4. Build scripts are code

- 5.5. Task dependencies

- 5.6. Dynamic tasks

- 5.7. Manipulating existing tasks

- 5.8. Shortcut notations

- 5.9. Dynamic task properties

- 5.10. Using Ant Tasks

- 5.11. Using methods

- 5.12. Default tasks

- 5.13. Configure by DAG

- 5.14. Summary

- 6. Java Quickstart

- 7. Groovy Quickstart

- 8. Web Application Quickstart

- 9. Artifact Basics

- 10. Using the Gradle Command-Line

- 11. Using the Gradle Graphical User Interface

- 12. Tutorial - 'This and That'

- 13. Writing Build Scripts

- 14. More about Tasks

- 15. Working With Files

- 16. Logging

- 17. Using Ant from Gradle

- 18. Using Plugins

- 19. Standard Gradle plugins

- 20. The Java Plugin

- 21. The Groovy Plugin

- 22. The Scala Plugin

- 23. The War Plugin

- 24. The Jetty Plugin

- 25. The Code Quality Plugin

- 26. The Sonar Plugin

- 27. The OSGi Plugin

- 28. The Eclipse Plugin

- 29. The IDEA Plugin

- 30. The Antlr Plugin

- 31. The Project Report Plugin

- 32. The Announce Plugin

- 33. The Application Plugin

- 34. Dependency Management

- 35. Artifact Management

- 36. The Maven Plugin

- 37. The Build Lifecycle

- 38. Multi-project Builds

- 38.1. Cross project configuration

- 38.2. Subproject configuration

- 38.3. Execution rules for multi-project builds

- 38.4. Running tasks by their absolute path

- 38.5. Project and task paths

- 38.6. Dependencies - Which dependencies?

- 38.7. Project lib dependencies

- 38.8. Multi-Project Building and Testing

- 38.9. Property and method inheritance

- 38.10. Summary

- 39. Writing Custom Task Classes

- 40. Writing Custom Plugins

- 41. Organizing Build Logic

- 42. Initialization Scripts

- 43. The Gradle Wrapper

- 44. Embedding Gradle

- A. Gradle Samples

- B. Potential Traps

- C. Gradle Command Line

- D. Existing IDE Support and how to cope without it

- Glossary

List of Examples

- 5.1. The first build script

- 5.2. Execution of a build script

- 5.3. A task definition shortcut

- 5.4. Using Groovy in Gradle's tasks

- 5.5. Using Groovy in Gradle's tasks

- 5.6. Declaration of dependencies between tasks

- 5.7. Lazy dependsOn - the other task does not exist (yet)

- 5.8. Dynamic creation of a task

- 5.9. Accessing a task via API - adding a dependency

- 5.10. Accessing a task via API - adding behaviour

- 5.11. Accessing task as a property of the build script

- 5.12. Assigning properties to a task

- 5.13. Using AntBuilder to execute ant.loadfile target

- 5.14. Using methods to organize your build logic

- 5.15. Defining a default tasks

- 5.16. Different outcomes of build depending on chosen tasks

- 6.1. Using the Java plugin

- 6.2. Building a Java project

- 6.3. Adding Maven repository

- 6.4. Adding dependencies

- 6.5. Customization of MANIFEST.MF

- 6.6. Adding a test system property

- 6.7. Publishing the JAR file

- 6.8. Eclipse plugin

- 6.9. Java example - complete build file

- 6.10. Multi-project build - hierarchical layout

- 6.11. Multi-project build - settings.gradle file

- 6.12. Multi-project build - common configuration

- 6.13. Multi-project build - dependencies between projects

- 6.14. Multi-project build - distribution file

- 7.1. Groovy plugin

- 7.2. Dependency on Groovy 1.6.0

- 7.3. Groovy example - complete build file

- 8.1. War plugin

- 8.2. Running web application with Jetty plugin

- 9.1. Definition of a configuration

- 9.2. Accessing a configuration

- 9.3. Configuration of a configuration

- 9.4. Usage of Maven central repository

- 9.5. Usage of a local directory

- 9.6. Accessing a repository

- 9.7. Configuration of a repository

- 9.8. Definition of an external dependency

- 9.9. Usage of external dependency of a configuration

- 10.1. Executing multiple tasks

- 10.2. Excluding tasks

- 10.3. Abbreviated task name

- 10.4. Abbreviated camel case task name

- 10.5. Selecting the project using a build file

- 10.6. Selecting the project using project directory

- 10.7. Obtaining information about projects

- 10.8. Providing a description for a project

- 10.9. Obtaining information about tasks

- 10.10. Changing the content of the task report

- 10.11. Obtaining more information about tasks

- 10.12. Obtaining information about dependencies

- 10.13. Information about properties

- 11.1. Launching the GUI

- 12.1. Directory creation with mkdir

- 12.2. Directory creation with Directory tasks

- 12.3. Setting properties with a gradle.properties file

- 12.4. Accessing the web via a proxy

- 12.5. Configuring the project using an external build script

- 12.6. Configuring arbitrary objects

- 12.7. Configuring arbitrary objects using a script

- 13.1. Accessing property of the Project object

- 13.2. Groovy JDK methods

- 13.3. Property accessors

- 13.4. Method call without parentheses

- 13.5. List and map literals

- 13.6. Closure as method parameter

- 13.7. Closure delegates

- 14.1. Defining tasks

- 14.2. Defining tasks - using strings

- 14.3. Defining tasks with alternative syntax

- 14.4. Accessing tasks as properties

- 14.5. Accessing tasks via tasks collection

- 14.6. Accessing tasks by path

- 14.7. Creating a copy task

- 14.8. Configuring a task - various ways

- 14.9. Configuring a task - fluent interface

- 14.10. Configuring a task - with closure

- 14.11. Configuring a task - with configure() method

- 14.12. Defining a task with closure

- 14.13. Adding dependency on task from another project

- 14.14. Adding dependency using task object

- 14.15. Adding dependency using closure

- 14.16. Adding a description to a task

- 14.17. Overwriting a task

- 14.18. Skipping a task using a predicate

- 14.19. Skipping tasks with StopExecutionException

- 14.20. Enabling and disabling tasks

- 14.21. A generator task

- 14.22. Declaring the inputs and outputs of a task

- 14.23. Task rule

- 14.24. Dependency on rule based tasks

- 15.1. Locating files

- 15.2. Creating a file collection

- 15.3. Using a file collection

- 15.4. Implementing a file collection

- 15.5. Creating a file tree

- 15.6. Using a file tree

- 15.7. Using an archive as a file tree

- 15.8. Specifying a set of files

- 15.9. Specifying a set of files

- 15.10. Copying files using the copy task

- 15.11. Specifying copy task source files and destination directory

- 15.12. Selecting the files to copy

- 15.13. Copying files using the copy() method

- 15.14. Renaming files as they are copied

- 15.15. Filtering files as they are copied

- 15.16. Nested copy specs

- 15.17. Using the Sync task to copy dependencies

- 15.18. Creating a ZIP archive

- 15.19. Creation of ZIP archive

- 15.20. Configuration of archive task - custom archive name

- 15.21. Configuration of archive task - appendix & classifier

- 16.1. Using stdout to write log messages

- 16.2. Writing your own log messages

- 16.3. Using SLF4J to write log messages

- 16.4. Configuring standard output capture

- 16.5. Configuring standard output capture for a task

- 16.6. Customizing what Gradle logs

- 17.1. Using an Ant task

- 17.2. Passing nested text to an Ant task

- 17.3. Passing nested elements to an Ant task

- 17.4. Using an Ant type

- 17.5. Using a custom Ant task

- 17.6. Declaring the classpath for a custom Ant task

- 17.7. Using a custom Ant task and dependency management together

- 17.8. Importing an Ant build

- 17.9. Task that depends on Ant target

- 17.10. Adding behaviour to an Ant target

- 17.11. Ant target that depends on Gradle task

- 17.12. Setting an Ant property

- 17.13. Getting an Ant property

- 17.14. Setting an Ant reference

- 17.15. Getting an Ant reference

- 18.1. Using a plugin

- 18.2. Applying a plugin by id

- 18.3. Applying a plugin by type

- 18.4. Applying a plugin by type

- 18.5. Configuring a plugin task

- 18.6. Plugin convention object

- 18.7. Using the plugin convention object

- 18.8. Explicit application of an implied plugin

- 20.1. Using the Java plugin

- 20.2. Custom Java source layout

- 20.3. Accessing a source set

- 20.4. Configuring the source directories of a source set

- 20.5. Defining a source set

- 20.6. Defining the classpath of a source set

- 20.7. Assembling a JAR for a source set

- 20.8. Generating the Javadoc for a source set

- 20.9. Running tests in a source set

- 20.10. Customization of MANIFEST.MF

- 20.11. Creating a manifest object.

- 20.12. Separate MANIFEST.MF for a particular archive

- 20.13. Separate MANIFEST.MF for a particular archive

- 21.1. Using the Groovy plugin

- 21.2. Custom Groovy source layout

- 21.3. Configuration of Groovy plugin

- 21.4. Configuration of Groovy plugin

- 22.1. Using the Scala plugin

- 22.2. Custom Scala source layout

- 22.3. Declaring the Scala version to use

- 22.4. Enabling the Fast Scala Compiler

- 23.1. Using the War plugin

- 23.2. Customization of war plugin

- 23.3. Generation of JAR archive in addition to WAR archive

- 24.1. Using the Jetty plugin

- 25.1. Using the code quality plugin

- 26.1. Using the Sonar plugin

- 26.2. Configuring connection settings

- 27.1. Using the OSGi plugin

- 27.2. Configuration of OSGi MANIFEST.MF file

- 28.1. Using the Eclipse plugin

- 28.2. Partial Overwrite for Classpath

- 28.3. Partial Overwrite for Project

- 28.4. Export Dependencies

- 28.5. Customizing the XML

- 29.1. Using the IDEA plugin

- 29.2. Partial Overwrite for Module

- 29.3. Partial Overwrite for Project

- 29.4. Export Dependencies

- 29.5. Customizing the XML

- 30.1. Using the Antlr plugin

- 30.2. Declare Antlr version

- 32.1. Using the announce plugin

- 32.2. Configure the announce plugin

- 32.3. Using the announce plugin

- 33.1. Using the application plugin

- 33.2. Configure the application main class

- 34.1. Module dependencies

- 34.2. Artifact only notation

- 34.3. Dependency with classifier

- 34.4. Client module dependencies - transitive dependencies

- 34.5. Project dependencies

- 34.6. File dependencies

- 34.7. Generated file dependencies

- 34.8. Gradle API dependencies

- 34.9. Excluding transitive dependencies

- 34.10. Optional attributes of dependencies

- 34.11. Collections and arrays of dependencies

- 34.12. Dependency configurations

- 34.13. Dependency configurations for project

- 34.14. Configuration.copy

- 34.15. Accessing declared dependencies

- 34.16. Configuration.files

- 34.17. Configuration.files with spec

- 34.18. Configuration.copy

- 34.19. Configuration.copy vs. Configuration.files

- 34.20. Adding central Maven repository

- 34.21. Adding several Maven repositories

- 34.22. Adding custom Maven repository

- 34.23. Adding additional Maven repositories for JAR files

- 34.24. Adding the local Maven cache as a repository

- 34.25. Accessing password protected Maven repository

- 34.26. Flat repository resolver

- 34.27. Definition of a custom repository

- 35.1. Assignment of an artifact to a configuration

- 35.2. Configuration of the upload task

- 36.1. Using the Maven plugin

- 36.2. Creating a stand alone pom.

- 36.3. Upload of file to remote Maven repository

- 36.4. Upload of file via SSH

- 36.5. Customization of pom

- 36.6. Builder style customization of pom

- 36.7. Modifying auto-generated content

- 36.8. Customization of Maven installer

- 36.9. Generation of multiple poms

- 36.10. Accessing a mapping configuration

- 37.1. Single project build

- 37.2. Hierarchical layout

- 37.3. Flat layout

- 37.4. Modification of elements of the project tree

- 37.5. Modification of elements of the project tree

- 37.6. Adding of test task to each project which has certain property set

- 37.7. Notifications

- 37.8. Setting of certain property to all tasks

- 37.9. Logging of start and end of each task execution

- 38.1. Multi-project tree - water & bluewhale projects

- 38.2. Build script of water (parent) project

- 38.3. Multi-project tree - water, bluewhale & krill projects

- 38.4. Water project build script

- 38.5. Defining common behaviour of all projects and subprojects

- 38.6. Defining specific behaviour for particular project

- 38.7. Defining specific behaviour for project krill

- 38.8. Adding custom behaviour to some projects (filtered by project name)

- 38.9. Adding custom behaviour to some projects (filtered by project properties)

- 38.10. Running build from subproject

- 38.11. Evaluation and execution of projects

- 38.12. Evaluation and execution of projects

- 38.13. Running tasks by their absolute path

- 38.14. Dependencies and execution order

- 38.15. Dependencies and execution order

- 38.16. Dependencies and execution order

- 38.17. Declaring dependencies

- 38.18. Declaring dependencies

- 38.19. Project execution dependencies

- 38.20. Cross project task dependencies

- 38.21. Configuration time dependencies

- 38.22. Configuration time dependencies - evaluationDependsOn

- 38.23. Configuration time dependencies

- 38.24. Dependencies - real life example - crossproject configuration

- 38.25. Project lib dependencies

- 38.26. Project lib dependencies

- 38.27. Fine grained control over dependencies

- 38.28. Build and Test Single Project

- 38.29. Partial Build and Test Single Project

- 38.30. Build and Test Depended On Projects

- 38.31. Build and Test Dependent Projects

- 39.1. Defining a custom task

- 39.2. A hello world task

- 39.3. A customizable hello world task

- 39.4. A build for a custom task

- 39.5. A custom task

- 39.6. Using a custom task in another project

- 39.7. Testing a custom task

- 40.1. A custom plugin

- 40.2. A custom plugin convention

- 40.3. A custom plugin with closure convention

- 40.4. A build for a custom plugin

- 40.5. Wiring for a custom plugin

- 40.6. Using a custom plugin in another project

- 40.7. Testing a custom plugin

- 40.8. Managing domain objects

- 41.1. Using inherited properties and methods

- 41.2. Using injected properties and methods

- 41.3. Running another build from a build

- 41.4. Declaring external dependencies for the build script

- 41.5. A build script with external dependencies

- 41.6. Ant optional dependencies

- 42.1. Declaring external dependencies for an init script

- 42.2. An init script with external dependencies

- 43.1. Wrapper task

- 43.2. Wrapper generated files

- 43.3. Configuration of wrapper task

- B.1. Variables scope: local and script wide

- B.2. Distinct configuration and execution phase

We would like to introduce Gradle to you, a build system that we think is a quantum leap for build technology in the Java (JVM) world. Gradle provides:

A very flexible general purpose build tool like Ant.

Switchable, build-by-convention frameworks a la Maven. But we never lock you in!

Very powerful support for multi-project builds.

Very powerful dependency management (based on Apache Ivy).

Full support for your existing Maven or Ivy repository infrastructure.

Support for transitive dependency management without the need for remote repositories or pom.xml and ivy.xml files.

Ant tasks and builds as first class citizens.

Groovy build scripts.

A rich domain model for describing your build.

In Chapter 2, Overview you will find a detailed overview of Gradle. Otherwise, the tutorials are waiting, have fun :)

This user guide, like Gradle itself, is under very active development. Some parts of Gradle aren't documented as completely as they need to be. Some of the content presented won't be entirely clear or will assume that you know more about Gradle than you do. We need your help to improve this user guide. You can find out more about contributing to the documentation at the Gradle web site.

You can find more examples, and some additions to this user guide, on the wiki. You can also contribute your own examples and extra content there.

Here is a list of some of Gradle's features.

- Declarative builds and build-by-convention

At the heart of Gradle lies a rich extensible Domain Specific Language (DSL) based on Groovy. Gradle pushes declarative builds to the next level by providing declarative language elements that you can assemble as you like. Those elements also provide build-by-convention support for Java, Groovy, OSGi, Web and Scala projects. Moreover, this declarative language is extensible: you can add your own language elements or enhance the existing ones, which results in concise, maintainable and comprehensible builds.

- Language for dependency based programming

The declarative language lies on top of a general purpose task graph, which you can fully leverage in your builds. It provides utmost flexibility to adapt Gradle to your unique needs.

- Structure your build

The suppleness and richness of Gradle finally allows you to apply common design principles to your build. For example, it is very easy to compose your build from reusable pieces of build logic. You can inline logic where an extra indirection would be inappropriate, and you are not forced to tear apart what belongs together (e.g. in your project hierarchy). This avoids smells like shotgun changes or divergent change that turn your build into a maintenance nightmare. At last you can create a well structured, easily maintained, comprehensible build.

- Deep API

Gradle is a pleasure to use embedded, and it offers many hooks over the whole lifecycle of build execution. It allows you to monitor and customize its configuration and execution behavior to its very core.

- Gradle scales

Gradle scales very well. It significantly increases your productivity, from simple single project builds up to huge enterprise multi-project builds. This is true for structuring the build. With the state-of-the-art incremental build function, this is also true for tackling the performance pain many large enterprise builds suffer from.

- Multi-project builds

Gradle's support for multi-project builds is outstanding. Project dependencies are first class citizens. We allow you to model the project relationships in a multi-project build as they really are for your problem domain. Gradle follows your layout, not vice versa.

Gradle provides partial builds. If you build a single subproject Gradle takes care of building all the subprojects that subproject depends on. You can also choose to rebuild the subprojects that depend on a particular subproject. Together with incremental builds this is a big time saver for larger builds.

- Many ways to manage your dependencies

Different teams prefer different ways to manage their external dependencies. Gradle provides convenient support for any strategy, from transitive dependency management with remote Maven and Ivy repositories to JARs or directories on the local file system.

- Gradle is the first build integration tool

Ant tasks are first class citizens. Even more interesting, Ant projects are first class citizens as well. Gradle provides a deep import for any Ant project, turning Ant targets into native Gradle tasks at runtime. You can depend on them from Gradle, you can enhance them from Gradle, you can even declare dependencies on Gradle tasks in your build.xml. The same integration is provided for properties, paths, etc ...

Gradle fully supports your existing Maven or Ivy repository infrastructure for publishing and retrieving dependencies. Gradle also provides a converter for turning a Maven pom.xml into a Gradle script. Runtime imports of Maven projects will come soon.

- Ease of migration

Gradle can adapt to any structure you have. Therefore you can always develop your Gradle build in the same branch where your production build lives, and both can evolve in parallel. We usually recommend writing tests that make sure that the produced artifacts are similar. That way the migration is as undisruptive and as reliable as possible. This follows the best practice for refactoring of applying baby steps.

- Groovy

Gradle's build scripts are written in Groovy, not XML. But unlike other approaches, this is not simply for exposing the raw scripting power of a dynamic language. That would just lead to a build that is very difficult to maintain. The whole design of Gradle is oriented towards being used as a language, not as a rigid framework. And Groovy is our glue that allows you to tell your individual story with the abstractions Gradle (or you) provide. Gradle provides some standard stories but they are not privileged in any form. For us, this is a major distinguishing feature compared to other declarative build systems. Our Groovy support is also not just a thin layer of syntactic sugar. The whole Gradle API is fully groovynized. Only with that does using Groovy bring the fun and productivity gain it can.

- The Gradle wrapper

The Gradle Wrapper allows you to execute Gradle builds on machines where Gradle is not installed. This is useful for example for some continuous integration servers. It is also useful for an open source project to keep the barrier low for building it. The wrapper is also very interesting for the enterprise. It is a zero administration approach for the client machines. It also enforces the usage of a particular Gradle version thus minimizing support issues.

- Free and open source

Gradle is an open source project, and is licensed under the Apache Software License (ASL).

We think the advantages of an internal DSL (based on a dynamic language) over XML are tremendous in the case of build scripts. There are a couple of dynamic languages out there. Why Groovy? The answer lies in the context Gradle is operating in. Although Gradle is a general purpose build tool at its core, its main focus is Java projects. In such projects the team members obviously know Java. We think a build should be as transparent as possible to all team members.

You might ask why we don't use Java as the language for build scripts. We think this is a valid question. It would have the highest transparency for your team and the lowest learning curve. But due to limitations of Java, such a build language would not be as nice, expressive and powerful as it could be. [1] Languages like Python, Groovy or Ruby do a much better job here. We have chosen Groovy as it offers by far the greatest transparency for Java people. Its base syntax is the same as Java's, as are its type system, its package structure and other things. Groovy builds a lot on top of that, but on common ground with Java.

For Java teams that also share Python or Ruby knowledge, or are happy to learn it, the above arguments don't apply. The Gradle design is well-suited for creating another build script engine in JRuby or Jython. It just doesn't have the highest priority for us at the moment. We happily support any community effort to create additional build script engines.

[1] At http://www.defmacro.org/ramblings/lisp.html you find an interesting article comparing Ant, XML, Java and Lisp. It's funny that the 'if Java had that syntax' syntax in this article is actually the Groovy syntax.

The following tutorials introduce some of the basics of Gradle, to help you get started.

- Chapter 4, Installing Gradle

Describes how to install Gradle.

- Chapter 5, Build Script Basics

Introduces the basic build script elements: projects and tasks.

- Chapter 6, Java Quickstart

Shows how to start using Gradle's build-by-convention support for Java projects.

- Chapter 7, Groovy Quickstart

Using Gradle's build-by-convention support for Groovy projects.

- Chapter 8, Web Application Quickstart

Using Gradle's build-by-convention support for Web applications.

Gradle requires a Java JDK, version 1.5 or higher, to be installed. Gradle ships with its own Groovy library, so Groovy does not need to be installed. Any existing Groovy installation is ignored by Gradle.

Gradle uses whichever JDK it finds in your path (to check, use java -version). Alternatively, you can set the JAVA_HOME environment variable to point to the install directory of the desired JDK.

You can download one of the Gradle distributions from the Gradle web site.

The Gradle distribution comes packaged as a ZIP. The full distribution contains:

The Gradle binaries.

The user guide (HTML and PDF).

The DSL reference guide.

The API documentation (Javadoc and Groovydoc).

Extensive samples, including the examples referenced in the user guide, along with some complete and more complex builds you can use as the starting point for your own build.

The binary sources. This is for reference only. If you want to build Gradle you need to download the source distribution or check out the sources from the source repository (see the Gradle web site for details).

For Un*x users

You need a GNU compatible tool to unzip Gradle, if you want the file permissions to be properly set. We mention this as some zip front ends for Mac OS X don't restore the file permissions properly.

For running Gradle, add GRADLE_HOME/bin to your PATH environment variable. Usually, this is sufficient to run Gradle.

You run Gradle via the gradle command. To check if Gradle is properly installed just type gradle -v and you should get an output like:

    ------------------------------------------------------------
    Gradle 1.0-milestone-2
    ------------------------------------------------------------

    Gradle build time: Thursday, 7 April 2011 04:24:33 PM EST
    Groovy: 1.7.10
    Ant: Apache Ant(TM) version 1.8.2 compiled on December 20 2010
    Ivy: 2.2.0
    JVM: 1.6.0_22 (Sun Microsystems Inc. 17.1-b03)
    OS: Linux 2.6.35-23-generic amd64

JVM options for running Gradle can be set via environment variables. You can use GRADLE_OPTS or JAVA_OPTS. Those variables can be used together. JAVA_OPTS is by convention an environment variable shared by many Java applications. A typical use case would be to set the HTTP proxy in JAVA_OPTS and the memory options in GRADLE_OPTS. Those variables can also be set at the beginning of the gradle or gradlew script.

Everything in Gradle sits on top of two basic concepts: projects and tasks.

Every Gradle build is made up of one or more projects. A project represents some component of your software which can be built. What this means exactly depends on what it is that you are building. For example, a project might represent a library JAR or a web application. It might represent a distribution ZIP assembled from the JARs produced by other projects. A project does not necessarily represent a thing to be built. It might represent a thing to be done, such as deploying your application to staging or production environments. Don't worry if this seems a little vague for now. Gradle's build-by-convention support adds a more concrete definition for what a project is.

Each project is made up of one or more tasks. A task represents some atomic piece of work which a build performs. This might be compiling some classes, creating a JAR, generating javadoc, or publishing some archives to a repository.

For now, we will look at defining some simple tasks in a build with one project. Later chapters cover working with multiple projects and go into more detail about projects and tasks.

You run a Gradle build using the gradle command. The gradle command looks for a file called build.gradle in the current directory. [2] We call this build.gradle file a build script, although strictly speaking it is a build configuration script, as we will see later. The build script defines a project and its tasks.

To try this out, create the following build script named build.gradle.
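A minimal sketch of such a build script, assuming only what this section describes: a single task named hello with one action attached. The greeting text is an assumption, borrowed from the later examples in this chapter.

```groovy
// build.gradle
// Defines one task named 'hello' and adds a single action to it.
task hello {
    doLast {
        // The action is a closure; it runs when the task executes.
        println 'Hello world!'
    }
}
```

Saved as build.gradle, this should print the greeting when the hello task is run.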

In a command-line shell, change to the containing directory and execute the build script by running gradle -q hello.

What does -q do?

Most of the examples in this user guide are run with the -q command-line option. This suppresses Gradle's log messages, so that only the output of the tasks is shown. This keeps the example output in this user guide a little clearer. You don't need to use this option if you don't want to. See Chapter 16, Logging for more details about the command-line options which affect Gradle's output.

What's going on here? This build script defines a single task, called hello, and adds an action to it. When you run gradle hello, Gradle executes the hello task, which in turn executes the action you've provided. The action is simply a closure containing some Groovy code to execute.

If you think this looks similar to Ant's targets, well, you are right. Gradle tasks are the equivalent of Ant targets. But as you will see, they are much more powerful. We have used different terminology from Ant, as we think the word task is more expressive than the word target. Unfortunately this introduces a terminology clash with Ant, as Ant calls its commands, such as javac or copy, tasks. So when we talk about tasks, we always mean Gradle tasks, which are the equivalent of Ant's targets. If we talk about Ant tasks (Ant commands), we explicitly say ant task.

There is a shorthand way to define a task like our hello task above, which is more concise.
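As a sketch of that shorthand, written with the << operator that the later examples in this chapter use (the greeting text is only illustrative):

```groovy
// build.gradle
// Shorthand: '<<' appends the closure as an action of the 'hello' task.
task hello << {
    println 'Hello world!'
}
```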

Again, this defines a task called hello with a single closure to execute.

We will use this task definition style throughout the user guide.

Gradle's build scripts expose to you the full power of Groovy. As an appetizer, have a look at this:

Example 5.4. Using Groovy in Gradle's tasks

build.gradle

    task upper << {
        String someString = 'mY_nAmE'
        println "Original: " + someString
        println "Upper case: " + someString.toUpperCase()
    }

Output of gradle -q upper

    > gradle -q upper
    Original: mY_nAmE
    Upper case: MY_NAME

or

Example 5.5. Using Groovy in Gradle's tasks

build.gradle

    task count << {
        4.times { print "$it " }
    }

Output of gradle -q count

    > gradle -q count
    0 1 2 3

As you probably have guessed, you can declare dependencies between your tasks.

Example 5.6. Declaration of dependencies between tasks

build.gradle

    task hello << {
        println 'Hello world!'
    }
    task intro(dependsOn: hello) << {
        println "I'm Gradle"
    }

Output of gradle -q intro

    > gradle -q intro
    Hello world!
    I'm Gradle

To add a dependency, the corresponding task does not need to exist.

Example 5.7. Lazy dependsOn - the other task does not exist (yet)

build.gradle

    task taskX(dependsOn: 'taskY') << {
        println 'taskX'
    }
    task taskY << {
        println 'taskY'
    }

Output of gradle -q taskX

    > gradle -q taskX
    taskY
    taskX

The dependency of taskX on taskY is declared before taskY is defined. This is very important for multi-project builds. Task dependencies are discussed in more detail in Section 14.4, “Adding dependencies to a task”.

Please note that you can't use a shortcut notation (see Section 5.8, “Shortcut notations”) when referring to a task which is not defined yet.
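To illustrate the difference, here is a small sketch in the style of Example 5.7. The task names first, second, third and fourth are hypothetical. Referring to a task by its property name works only once the task has been defined, while a string name can be used before the definition:

```groovy
// build.gradle
task first << { println 'first' }

// 'first' already exists, so it can be referenced as a property.
task second(dependsOn: first) << { println 'second' }

// 'fourth' is not defined yet, so it must be named with a string.
task third(dependsOn: 'fourth') << { println 'third' }

task fourth << { println 'fourth' }
```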

The power of Groovy can be used for more than defining what a task does. For example, you can also use it to dynamically create tasks.

Example 5.8. Dynamic creation of a task

build.gradle

    4.times { counter ->
        task "task$counter" << {
            println "I'm task number $counter"
        }
    }

Output of gradle -q task1

    > gradle -q task1
    I'm task number 1

Once tasks are created they can be accessed via an API. This is different to Ant. For example you can create additional dependencies.

Example 5.9. Accessing a task via API - adding a dependency

build.gradle

    4.times { counter ->
        task "task$counter" << {
            println "I'm task number $counter"
        }
    }
    task0.dependsOn task2, task3

Output of gradle -q task0

    > gradle -q task0
    I'm task number 2
    I'm task number 3
    I'm task number 0

Or you can add behavior to an existing task.

Example 5.10. Accessing a task via API - adding behaviour

build.gradle

    task hello << {
        println 'Hello Earth'
    }
    hello.doFirst {
        println 'Hello Venus'
    }
    hello.doLast {
        println 'Hello Mars'
    }
    hello << {
        println 'Hello Jupiter'
    }

Output of gradle -q hello

    > gradle -q hello
    Hello Venus
    Hello Earth
    Hello Mars
    Hello Jupiter

The calls doFirst and doLast can be executed multiple times. They add an action to the beginning or the end of the task's actions list. When the task executes, the actions in the action list are executed in order. The << operator is simply an alias for doLast.
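As a small sketch of how this ordering plays out (the task name greet and the messages are made up for illustration), each doFirst call puts its action in front of the actions already registered, while each doLast call appends to the end:

```groovy
// Hypothetical task, used only to illustrate action ordering.
task greet

greet.doFirst { println 'second' }   // so far the only action
greet.doFirst { println 'first' }    // inserted in front of 'second'
greet.doLast { println 'third' }     // appended to the end of the list
greet << { println 'fourth' }        // << is an alias for doLast
```

Running gradle -q greet with this script should print first, second, third and fourth, in that order.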

As you might have noticed in the previous examples, there is a convenient notation for accessing an existing task. Each task is available as a property of the build script:

Example 5.11. Accessing task as a property of the build script

build.gradle

task hello << {

println 'Hello world!'

}

hello.doLast {

println "Greetings from the $hello.name task."

}

Output of gradle -q hello

> gradle -q hello
Hello world!
Greetings from the hello task.

This enables very readable code, especially when using the out-of-the-box tasks provided by the plugins (e.g. compile).

You can assign arbitrary new properties to any task.

Example 5.12. Assigning properties to a task

build.gradle

task myTask

myTask.myProperty = 'myCustomPropValue'

task showProps << {

println myTask.myProperty

}

Output of gradle -q showProps

> gradle -q showProps
myCustomPropValue

Ant tasks are first-class citizens in Gradle. Gradle provides excellent integration for Ant tasks simply by relying on Groovy, which ships with the fantastic AntBuilder. Using Ant tasks from Gradle is as convenient as, and more powerful than, using Ant tasks from a build.xml file. The example below shows how to execute Ant tasks and how to access Ant properties:

Example 5.13. Using AntBuilder to execute ant.loadfile target

build.gradle

task loadfile << {

def files = file('../antLoadfileResources').listFiles().sort()

files.each { File file ->

if (file.isFile()) {

ant.loadfile(srcFile: file, property: file.name)

println " *** $file.name ***"

println "${ant.properties[file.name]}"

}

}

}

Output of gradle -q loadfile

> gradle -q loadfile
 *** agile.manifesto.txt ***
Individuals and interactions over processes and tools
Working software over comprehensive documentation
Customer collaboration over contract negotiation
Responding to change over following a plan
 *** gradle.manifesto.txt ***
Make the impossible possible, make the possible easy and make the easy elegant.
(inspired by Moshe Feldenkrais)

There is lots more you can do with Ant in your build scripts. You can find out more in Chapter 17, Using Ant from Gradle.

Gradle scales in how you can organize your build logic. The first level of organizing the build logic for the example above is extracting a method.

Example 5.14. Using methods to organize your build logic

build.gradle

task checksum << {

fileList('../antLoadfileResources').each {File file ->

ant.checksum(file: file, property: "cs_$file.name")

println "$file.name Checksum: ${ant.properties["cs_$file.name"]}"

}

}

task loadfile << {

fileList('../antLoadfileResources').each {File file ->

ant.loadfile(srcFile: file, property: file.name)

println "I'm fond of $file.name"

}

}

File[] fileList(String dir) {

file(dir).listFiles({file -> file.isFile() } as FileFilter).sort()

}

Output of gradle -q loadfile

> gradle -q loadfile
I'm fond of agile.manifesto.txt
I'm fond of gradle.manifesto.txt

Later you will see that such methods can be shared among subprojects in multi-project builds. If your build logic becomes more complex, Gradle offers you other very convenient ways to organize it. We have devoted a whole chapter to this. See Chapter 41, Organizing Build Logic.

Gradle allows you to define one or more default tasks for your build.

Example 5.15. Defining default tasks

build.gradle

defaultTasks 'clean', 'run'

task clean << {
    println 'Default Cleaning!'
}

task run << {
    println 'Default Running!'
}

task other << {
    println "I'm not a default task!"
}

Output of gradle -q

> gradle -q
Default Cleaning!
Default Running!

This is equivalent to running gradle clean run. In a multi-project build every subproject can have its own specific default tasks. If a subproject does not specify default tasks, the default tasks of the parent project are used (if defined).

As we describe in full detail later (see Chapter 37, The Build Lifecycle), Gradle has a configuration phase and an execution phase. After the configuration phase, Gradle knows all the tasks that should be executed. Gradle offers you a hook to make use of this information. A use case for this would be to check whether the release task is among the tasks to be executed. Depending on this, you can assign different values to some variables.

In the following example, executing the distribution and release tasks results in different values of the version variable.

Example 5.16. Different outcomes of build depending on chosen tasks

build.gradle

gradle.taskGraph.whenReady {taskGraph ->

if (taskGraph.hasTask(':release')) {

version = '1.0'

} else {

version = '1.0-SNAPSHOT'

}

}

task distribution << {

println "We build the zip with version=$version"

}

task release(dependsOn: 'distribution') << {

println 'We release now'

}

Output of gradle -q distribution

> gradle -q distribution
We build the zip with version=1.0-SNAPSHOT

Output of gradle -q release

> gradle -q release
We build the zip with version=1.0
We release now

The important point is that choosing the release task has an effect before the release task itself is executed. The release task does not even have to be the primary task (i.e. the task passed to the gradle command); it only has to be among the tasks to be executed.

This is not the end of the story for tasks. So far we have worked with simple tasks. Tasks will be revisited in Chapter 14, More about Tasks and when we look at the Java plugin in Chapter 20, The Java Plugin.

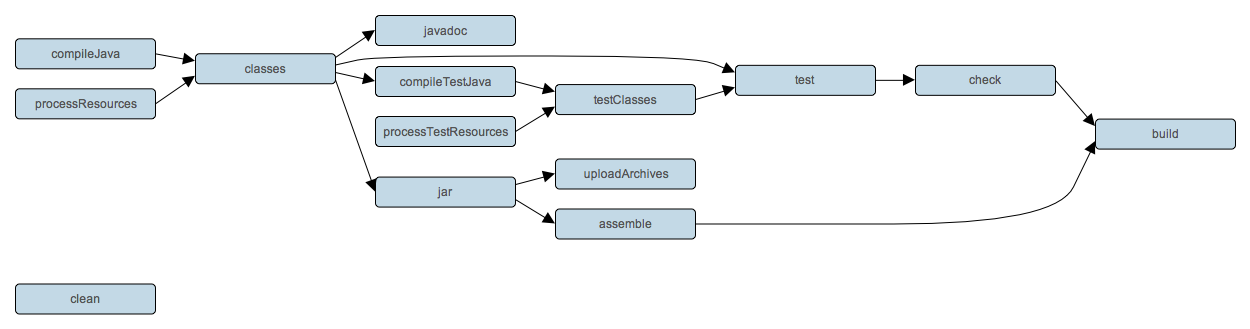

As we have seen, Gradle is a general-purpose build tool. It can build pretty much anything you care to implement in your build script. Out-of-the-box, however, it doesn't build anything unless you add code to your build script to do so.

Most Java projects are pretty similar as far as the basics go: you need to compile your Java source files, run some unit tests, and create a JAR file containing your classes. It would be nice if you didn't have to code all this up for every project. Luckily, you don't have to. Gradle solves this problem through the use of plugins. A plugin is an extension to Gradle which configures your project in some way, typically by adding some pre-configured tasks which together do something useful. Gradle ships with a number of plugins, and you can easily write your own and share them with others. One such plugin is the Java plugin. This plugin adds some tasks to your project which will compile and unit test your Java source code, and bundle it into a JAR file.

The Java plugin is convention based. This means that the plugin defines default values for many aspects of the project, such as where the Java source files are located. If you follow the convention in your project, you generally don't need to do much in your build script to get a useful build. Gradle allows you to customize your project if you don't want to or cannot follow the convention in some way. In fact, because support for Java projects is implemented as a plugin, you don't have to use the plugin at all to build a Java project, if you don't want to.

We have in-depth coverage with many examples about the Java plugin, dependency management and multi-project builds in later chapters. In this chapter we want to give you an initial idea of how to use the Java plugin to build a Java project.

Let's look at a simple example. To use the Java plugin, add the following to your build file:

Example 6.1. Using the Java plugin

build.gradle

apply plugin: 'java'

Note: The code for this example can be found at samples/java/quickstart which is in both the binary and source distributions of Gradle.

This is all you need to define a Java project. This will apply the Java plugin to your project, which adds a number of tasks to your project.

What tasks are available?

You can use gradle tasks to list the tasks of a project. This will let you see the tasks that the Java plugin has added to your project.

Gradle expects to find your production source code under src/main/java and your test source code under src/test/java. In addition, any files under src/main/resources will be included in the JAR file as resources, and any files under src/test/resources will be included in the classpath used to run the tests. All output files are created under the build directory, with the JAR file ending up in the build/libs directory.

The Java plugin adds quite a few tasks to your project. However, there are only a handful of tasks that you will need to use to build the project. The most commonly used task is the build task, which does a full build of the project. When you run gradle build, Gradle will compile and test your code, and create a JAR file containing your main classes and resources:

Example 6.2. Building a Java project

Output of gradle build

> gradle build
:compileJava
:processResources
:classes
:jar
:assemble
:compileTestJava
:processTestResources
:testClasses
:test
:check
:build

BUILD SUCCESSFUL

Total time: 1 secs

Some other useful tasks are:

- clean

Deletes the build directory, removing all built files.

- assemble

Compiles and jars your code, but does not run the unit tests. Other plugins add more artifacts to this task. For example, if you use the War plugin, this task will also build the WAR file for your project.

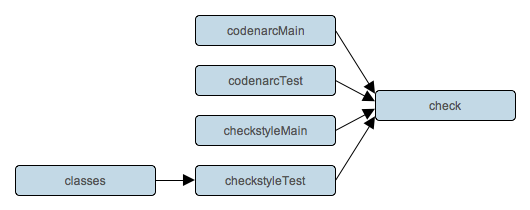

- check

Compiles and tests your code. Other plugins add more checks to this task. For example, if you use the Code Quality plugin, this task will also run Checkstyle against your source code.

Usually, a Java project will have some dependencies on external JAR files. To reference these JAR files in the project, you need to tell Gradle where to find them. In Gradle, artifacts such as JAR files are located in a repository. A repository can be used for fetching the dependencies of a project, for publishing the artifacts of a project, or both. For this example, we will use the public Maven repository:
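A minimal sketch of such a repository declaration, matching the repositories block of the complete build file in Example 6.9:

```groovy
// Resolve external dependencies from the public Maven central repository.
// (Same block as in the complete build file, Example 6.9.)
repositories {
    mavenCentral()
}
```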

Let's add some dependencies. Here, we will declare that our production classes have a compile-time dependency on commons collections, and that our test classes have a compile-time dependency on junit:

Example 6.4. Adding dependencies

build.gradle

dependencies {

compile group: 'commons-collections', name: 'commons-collections', version: '3.2'

testCompile group: 'junit', name: 'junit', version: '4.+'

}

You can find out more in Chapter 34, Dependency Management.

The Java plugin adds a number of properties to your project. These properties have default values which are usually sufficient to get started. It's easy to change these values if they don't suit. Let's look at this for our sample. Here we will specify the version number for our Java project, along with the Java version our source is written in. We also add some attributes to the JAR manifest.

Example 6.5. Customization of MANIFEST.MF

build.gradle

sourceCompatibility = 1.5
version = '1.0'
jar {
    manifest {
        attributes 'Implementation-Title': 'Gradle Quickstart', 'Implementation-Version': version
    }
}

What properties are available?

You can use gradle properties to list the properties of a project. This will allow you to see the properties added by the Java plugin, and their default values.

The tasks which the Java plugin adds are regular tasks, exactly the same as if they were declared in the build file. This means you can use any of the mechanisms shown in earlier chapters to customise these tasks. For example, you can set the properties of a task, add behaviour to a task, change the dependencies of a task, or replace a task entirely. In our sample, we will configure the test task, which is of type Test, to add a system property when the tests are executed:
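A minimal sketch of this configuration, taken from the test block of the complete build file in Example 6.9 (the property name and value are placeholders):

```groovy
// Configure the test task added by the Java plugin.
// 'property' and 'value' are placeholder names, as in Example 6.9.
test {
    systemProperties 'property': 'value'
}
```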

Usually the JAR file needs to be published somewhere. To do this, you need to tell Gradle where to publish the JAR file. In Gradle, artifacts such as JAR files are published to repositories. In our sample, we will publish to a local directory. You can also publish to a remote location, or multiple locations.

Example 6.7. Publishing the JAR file

build.gradle

uploadArchives {

repositories {

flatDir(dirs: file('repos'))

}

}

To publish the JAR file, run gradle uploadArchives.

To import your project into Eclipse, you need to add another plugin to your build file:
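A minimal sketch of that addition, matching the plugin line used in the complete build file in Example 6.9:

```groovy
// Adds the tasks that generate the Eclipse project files.
// (Same line as in the complete build file, Example 6.9.)
apply plugin: 'eclipse'
```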

Now run gradle eclipse to generate the Eclipse project files. More on the Eclipse task can be found in Chapter 28, The Eclipse Plugin.

Here's the complete build file for our sample:

Example 6.9. Java example - complete build file

build.gradle

apply plugin: 'java'
apply plugin: 'eclipse'

sourceCompatibility = 1.5
version = '1.0'
jar {
    manifest {
        attributes 'Implementation-Title': 'Gradle Quickstart', 'Implementation-Version': version
    }
}

repositories {
    mavenCentral()
}

dependencies {
    compile group: 'commons-collections', name: 'commons-collections', version: '3.2'
    testCompile group: 'junit', name: 'junit', version: '4.+'
}

test {
    systemProperties 'property': 'value'
}

uploadArchives {
    repositories {
        flatDir(dirs: file('repos'))
    }
}

Now let's look at a typical multi-project build. Below is the layout for the project:

Example 6.10. Multi-project build - hierarchical layout

Build layout

multiproject/
  api/
  services/webservice/
  shared/

Note: The code for this example can be found at samples/java/multiproject which is in both the binary and source distributions of Gradle.

Here we have three projects. Project api produces a JAR file which is shipped to the client to provide them a Java client for your XML webservice. Project webservice is a webapp which returns XML. Project shared contains code used both by api and webservice.

To define a multi-project build, you need to create a settings file. The settings file lives in the root directory of the source tree, and specifies which projects to include in the build. It must be called settings.gradle. For this example, we are using a simple hierarchical layout. Here is the corresponding settings file:

Example 6.11. Multi-project build - settings.gradle file

settings.gradle

include "shared", "api", "services:webservice", "services:shared"

You can find out more about the settings file in Chapter 38, Multi-project Builds.

For most multi-project builds, there is some configuration which is common to all projects. In our sample, we will define this common configuration in the root project, using a technique called configuration injection. Here, the root project is like a container and the subprojects method iterates over the elements of this container (the projects, in this instance) and injects the specified configuration. This way we can easily define the manifest content for all archives, and some common dependencies:

Example 6.12. Multi-project build - common configuration

build.gradle

subprojects {

apply plugin: 'java'

apply plugin: 'eclipse'

repositories {

mavenCentral()

}

dependencies {

testCompile 'junit:junit:4.8.2'

}

version = '1.0'

jar {

manifest.attributes provider: 'gradle'

}

}

Notice that our sample applies the Java plugin to each subproject. This means the tasks and configuration properties we have seen in the previous section are available in each subproject. So, you can compile, test, and JAR all the projects by running gradle build from the root project directory.

You can add dependencies between projects in the same build, so that, for example, the JAR file of one project is used to compile another project. In the api build file we will add a dependency on the JAR produced by the shared project. Due to this dependency, Gradle will ensure that project shared always gets built before project api.

Example 6.13. Multi-project build - dependencies between projects

api/build.gradle

dependencies {

compile project(':shared')

}

See Section 38.7.1, “Disabling the build of dependency projects” for how to disable this functionality.

In this chapter, you have seen how to do some of the things you commonly need to build a Java based project. This chapter is not exhaustive, and there are many other things you can do with Java projects in Gradle. These are dealt with in later chapters. Also, a lot of the behaviour you have seen in this chapter is configurable. For example, you can change where Gradle looks for Java source files, add extra tasks, or change what any task actually does. Again, you will see how this works in later chapters.

You can find out more about the Java plugin in Chapter 20, The Java Plugin, and you can find more sample Java projects in the samples/java directory in the Gradle distribution.

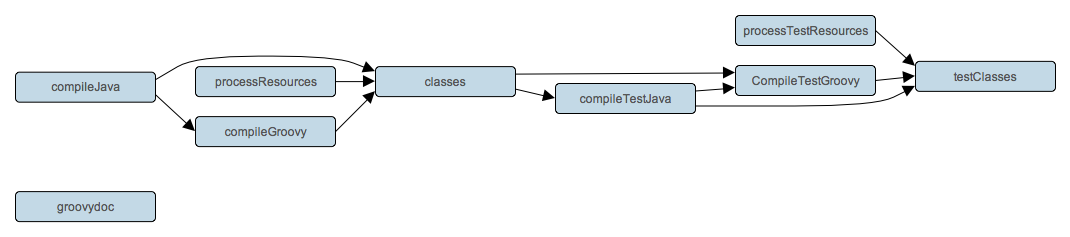

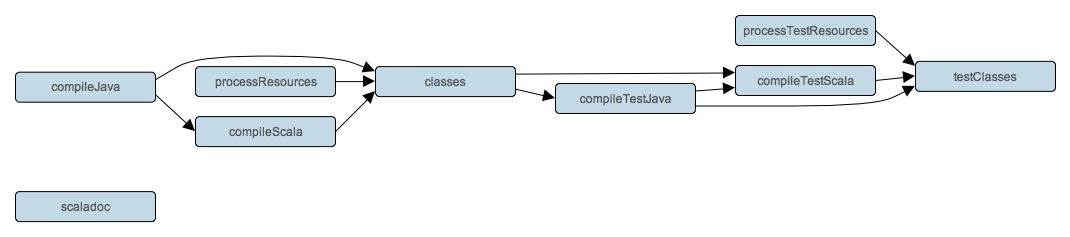

To build a Groovy project, you use the Groovy plugin. This plugin extends the Java plugin to add Groovy compilation capabilities to your project. Your project can contain Groovy source code, Java source code, or a mix of the two. In every other respect, a Groovy project is identical to a Java project, which we have already seen in Chapter 6, Java Quickstart.

Let's look at an example. To use the Groovy plugin, add the following to your build file:

Example 7.1. Groovy plugin

build.gradle

apply plugin: 'groovy'

Note: The code for this example can be found at samples/groovy/quickstart which is in both the binary and source distributions of Gradle.

This will also apply the Java plugin to the project, if it has not already been applied. The Groovy plugin extends the compile task to look for source files in directory src/main/groovy, and the compileTest task to look for test source files in directory src/test/groovy. The compile tasks use joint compilation for these directories, which means they can contain a mixture of Java and Groovy source files.

To use the Groovy compilation tasks, you must also declare the Groovy version to use and where to find the Groovy libraries. You do this by adding a dependency to the groovy configuration. The compile configuration inherits this dependency, so the Groovy libraries will be included in the classpath when compiling Groovy and Java source. For our sample, we will use Groovy 1.7.10 from the public Maven repository:

Example 7.2. Dependency on Groovy 1.7.10

build.gradle

repositories {

mavenCentral()

}

dependencies {

groovy group: 'org.codehaus.groovy', name: 'groovy', version: '1.7.10'

}

Here is our complete build file:

Example 7.3. Groovy example - complete build file

build.gradle

apply plugin: 'eclipse'
apply plugin: 'groovy'

repositories {
    mavenCentral()
}

dependencies {
    groovy group: 'org.codehaus.groovy', name: 'groovy', version: '1.7.10'
    testCompile group: 'junit', name: 'junit', version: '4.8.2'
}

Running gradle build will compile, test and JAR your project.

This chapter describes a very simple Groovy project. Usually, a real project will require more than this. Because a Groovy project is a Java project, whatever you can do with a Java project, you can also do with a Groovy project.

You can find out more about the Groovy plugin in Chapter 21, The Groovy Plugin, and you can find more sample Groovy projects in the samples/groovy directory in the Gradle distribution.

This chapter is a work in progress.

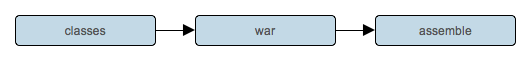

This chapter introduces some of Gradle's support for web applications. Gradle provides two plugins for web application development: the War plugin and the Jetty plugin. The War plugin extends the Java plugin to build a WAR file for your project. The Jetty plugin extends the War plugin to allow you to deploy your web application to an embedded Jetty web container.

To build a WAR file, you apply the War plugin to your project:

Example 8.1. War plugin

build.gradle

apply plugin: 'war'

Note: The code for this example can be found at samples/webApplication/quickstart which is in both the binary and source distributions of Gradle.

This also applies the Java plugin to your project. Running gradle build will compile, test and WAR your project. Gradle will look for the source files to include in the WAR file in src/main/webapp. Your compiled classes and their runtime dependencies are also included in the WAR file.

Groovy web applications

You can combine multiple plugins in a single project, so you can use the War and Groovy plugins together to build a Groovy based web application. The appropriate groovy libraries will be added to the WAR file for you.

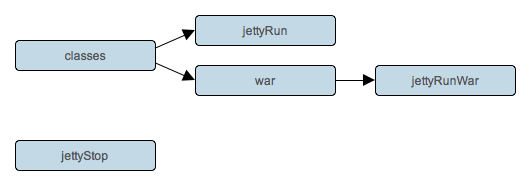

To run your web application, you apply the Jetty plugin to your project:
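A minimal sketch, assuming the Jetty plugin is applied by the id jetty in the same way as the War plugin in Example 8.1:

```groovy
// Assumption: the Jetty plugin id is 'jetty', mirroring apply plugin: 'war' above.
apply plugin: 'jetty'
```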

This also applies the War plugin to your project. Running gradle jettyRun will run your web application in an embedded Jetty web container. Running gradle jettyRunWar will build the WAR file, and then run it in an embedded web container.

TODO: which url, configure port, uses source files in place and can edit your files and reload.

You can find out more about the War plugin in Chapter 23, The War Plugin and the Jetty plugin in Chapter 24, The Jetty Plugin. You can find more sample Java projects in the samples/webApplication directory in the Gradle distribution.

This chapter is currently under construction.

For all the details of artifact handling see Chapter 35, Artifact Management.

This chapter introduces some of the basics of artifact handling in Gradle.

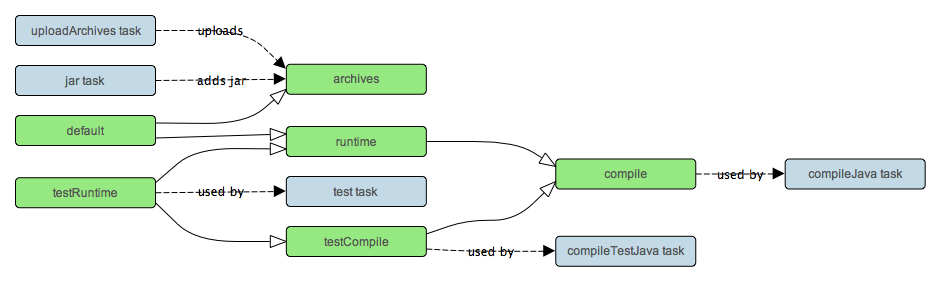

Artifacts are grouped into configurations. A configuration is simply a set of files with a name. You can use them to declare the external dependencies your project has, or to declare the artifacts which your project publishes.

To define a configuration:
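A minimal sketch, using the same declaration that appears in Example 9.8 (the configuration name compile is just the one used throughout this chapter):

```groovy
// Declares a configuration named 'compile', as in Example 9.8.
configurations {
    compile
}
```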

To access a configuration:

Example 9.2. Accessing a configuration

build.gradle

println configurations.compile.name

println configurations['compile'].name

To configure a configuration:

Example 9.3. Configuration of a configuration

build.gradle

configurations {

compile {

description = 'compile classpath'

transitive = true

}

runtime {

extendsFrom compile

}

}

configurations.compile {

description = 'compile classpath'

}

Artifacts are stored in repositories.

To use the Maven central repository:
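A minimal sketch, using the mavenCentral() call that also appears in the quickstart build files of the earlier chapters:

```groovy
// Adds the public Maven central repository to this project's repositories.
repositories {
    mavenCentral()
}
```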

To use a local directory:

Example 9.5. Usage of a local directory

build.gradle

repositories {

flatDir name: 'localRepository', dirs: 'lib'

}

You can also use any Ivy resolver. You can have multiple repositories.

To access a repository:

Example 9.6. Accessing a repository

build.gradle

println repositories.localRepository.name

println repositories['localRepository'].name

To configure a repository:

Example 9.7. Configuration of a repository

build.gradle

repositories {

localRepository {

addArtifactPattern(file('lib').absolutePath + '/[name]/[revision]/[name]-[revision].[ext]')

}

}

repositories.localRepository {

addArtifactPattern(file('lib').absolutePath + '/[name]/[revision]/[name]-[revision].[ext]')

}

To define an external dependency, you add a dependency to a configuration:

Example 9.8. Definition of an external dependency

build.gradle

configurations {

compile

}

dependencies {

compile group: 'commons-collections', name: 'commons-collections', version: '3.2'

}

group and version are optional.

TBD - configuring an external dependency

To use the external dependencies of a configuration:

Example 9.9. Usage of external dependency of a configuration

build.gradle

task listJars << {

configurations.compile.each { File file -> println file.name }

}

Output of gradle -q listJars

> gradle -q listJars
commons-collections-3.2.jar

Configurations are contained in a ConfigurationContainer. Each configuration implements the Configuration interface.

This chapter introduces the basics of the Gradle command-line. You run a build using the gradle command, which you have already seen in action in previous chapters.

You can execute multiple tasks in a single build by listing each of the tasks on the command-line. For example, the command gradle compile test will execute the compile and test tasks. Gradle will execute the tasks in the order that they are listed on the command-line, and will also execute the dependencies for each task. Each task is executed once only, regardless of how it came to be included in the build: whether it was specified on the command-line, or it is a dependency of another task, or both. Let's look at an example.

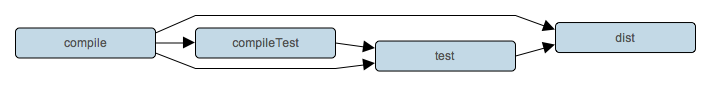

Four tasks are defined below. Both dist and test depend on the compile task. Running gradle dist test for this build script results in the compile task being executed only once.

Example 10.1. Executing multiple tasks

build.gradle

task compile << {

println 'compiling source'

}

task compileTest(dependsOn: compile) << {

println 'compiling unit tests'

}

task test(dependsOn: [compile, compileTest]) << {

println 'running unit tests'

}

task dist(dependsOn: [compile, test]) << {

println 'building the distribution'

}

Output of gradle dist test

> gradle dist test
:compile
compiling source
:compileTest
compiling unit tests
:test
running unit tests
:dist
building the distribution

BUILD SUCCESSFUL

Total time: 1 secs

Because each task is executed once only, executing gradle test test is exactly the same as executing gradle test.

You can exclude a task from being executed using the -x command-line option and providing the name of the task to exclude. Let's try this with the sample build file above.

Example 10.2. Excluding tasks

Output of gradle dist -x test

> gradle dist -x test
:compile
compiling source
:dist
building the distribution

BUILD SUCCESSFUL

Total time: 1 secs

You can see from the output of this example that the test task is not executed, even though it is a dependency of the dist task. You will also notice that the test task's dependencies, such as compileTest, are not executed either. Those dependencies of test that are required by another task, such as compile, are still executed.

When you specify tasks on the command-line, you don't have to provide the full name of the task. You only need to provide enough of the task name to uniquely identify the task. For example, in the sample build above, you can execute task dist by running gradle d:

Example 10.3. Abbreviated task name

Output of gradle di

> gradle di
:compile
compiling source
:compileTest
compiling unit tests
:test
running unit tests
:dist
building the distribution

BUILD SUCCESSFUL

Total time: 1 secs

You can also abbreviate each word in a camel case task name. For example, you can execute task compileTest by running gradle compTest or even gradle cT.

Example 10.4. Abbreviated camel case task name

Output of gradle cT

> gradle cT
:compile
compiling source
:compileTest
compiling unit tests

BUILD SUCCESSFUL

Total time: 1 secs

You can also use these abbreviations with the -x command-line option.

When you run the gradle command, it looks for a build file in the current directory. You can use the -b option to select another build file. For example:

Example 10.5. Selecting the project using a build file

subdir/myproject.gradle

task hello << {

println "using build file '$buildFile.name' in '$buildFile.parentFile.name'."

}

Output of gradle -q -b subdir/myproject.gradle hello

> gradle -q -b subdir/myproject.gradle hello
using build file 'myproject.gradle' in 'subdir'.

Alternatively, you can use the -p option to specify the project directory to use:

Example 10.6. Selecting the project using project directory

Output of gradle -q -p subdir hello

> gradle -q -p subdir hello
using build file 'build.gradle' in 'subdir'.

Gradle provides several built-in tasks which show particular details of your build. This can be useful for understanding the structure and dependencies of your build, and for debugging problems.

In addition to the built-in tasks shown below, you can also use the project report plugin to add tasks to your project which will generate these reports.

Running gradle projects gives you a list of the sub-projects of the selected project, displayed in a hierarchy. Here is an example:

Example 10.7. Obtaining information about projects

Output of gradle -q projects

> gradle -q projects
------------------------------------------------------------
Root Project
------------------------------------------------------------

Root project 'projectReports'
+--- Project ':api' - The shared API for the application
\--- Project ':webapp' - The Web application implementation

To see a list of the tasks of a project, run gradle <project-path>:tasks
For example, try running gradle :api:tasks

The report shows the description of each project, if specified. You can provide a description for a project by setting the description property:

Example 10.8. Providing a description for a project

build.gradle

description = 'The shared API for the application'

Running gradle tasks gives you a list of the main tasks of the selected project. This report shows the default tasks for the project, if any, and a description for each task. Below is an example of this report:

Example 10.9. Obtaining information about tasks

Output of gradle -q tasks

> gradle -q tasks
------------------------------------------------------------
Root Project
------------------------------------------------------------

Default tasks: dists

Build tasks
-----------
clean - Deletes the build directory (build)
dists - Builds the distribution
libs - Builds the JAR

Help tasks
----------
dependencies - Displays the dependencies of root project 'projectReports'.
help - Displays a help message
projects - Displays the sub-projects of root project 'projectReports'.
properties - Displays the properties of root project 'projectReports'.
tasks - Displays the tasks in root project 'projectReports'.

To see all tasks and more detail, run with --all.

By default, this report shows only those tasks which have been assigned to a task group. You can assign a task to a group by setting the group property for the task. You can also set the description property, to provide a description to be included in the report.

Example 10.10. Changing the content of the task report

build.gradle

dists {

description = 'Builds the distribution'

group = 'build'

}

You can obtain more information in the task listing using the --all option. With this option, the task report lists all tasks in the project, grouped by main task, and the dependencies for each task. Here is an example:

Example 10.11. Obtaining more information about tasks

Output of gradle -q tasks --all

> gradle -q tasks --all

------------------------------------------------------------

Root Project

------------------------------------------------------------

Default tasks: dists

Build tasks

-----------

clean - Deletes the build directory (build)

api:clean - Deletes the build directory (build)

webapp:clean - Deletes the build directory (build)

dists - Builds the distribution [api:libs, webapp:libs]

docs - Builds the documentation

api:libs - Builds the JAR

api:compile - Compiles the source files

webapp:libs - Builds the JAR [api:libs]

webapp:compile - Compiles the source files

Help tasks

----------

dependencies - Displays the dependencies of root project 'projectReports'.

help - Displays a help message

projects - Displays the sub-projects of root project 'projectReports'.

properties - Displays the properties of root project 'projectReports'.

tasks - Displays the tasks in root project 'projectReports'.

Running gradle dependencies gives you a list of the dependencies of the selected project, broken down by configuration. For each configuration, the direct and transitive dependencies of that configuration are shown in a tree. Below is an example of this report:

Example 10.12. Obtaining information about dependencies

Output of gradle -q dependencies api:dependencies webapp:dependencies

> gradle -q dependencies api:dependencies webapp:dependencies
------------------------------------------------------------
Root Project
------------------------------------------------------------

No configurations

------------------------------------------------------------
Project :api - The shared API for the application
------------------------------------------------------------

compile
\--- org.codehaus.groovy:groovy-all:1.7.10 [default]

------------------------------------------------------------
Project :webapp - The Web application implementation
------------------------------------------------------------

compile
+--- projectReports:api:1.0-SNAPSHOT [compile]
|    \--- org.codehaus.groovy:groovy-all:1.7.10 [default]
\--- commons-io:commons-io:1.2 [default]

Running gradle properties gives you a list of the properties of the selected

project. This is a snippet from the output:

Example 10.13. Information about properties

Output of gradle -q api:properties

> gradle -q api:properties

------------------------------------------------------------

Project :api - The shared API for the application

------------------------------------------------------------

additionalProperties: {}

all: [task ':api:clean', task ':api:compile', task ':api:libs']

allprojects: [project ':api']

ant: org.gradle.api.internal.project.DefaultAntBuilder@12345

antBuilderFactory: org.gradle.api.internal.project.DefaultAntBuilderFactory@12345

artifacts: org.gradle.api.internal.artifacts.dsl.DefaultArtifactHandler@12345

asDynamicObject: org.gradle.api.internal.DynamicObjectHelper@12345

asMap: {clean=task ':api:clean', compile=task ':api:compile', libs=task ':api:libs'}

buildDir: /home/user/gradle/samples/userguide/tutorial/projectReports/api/build

buildDirName: build

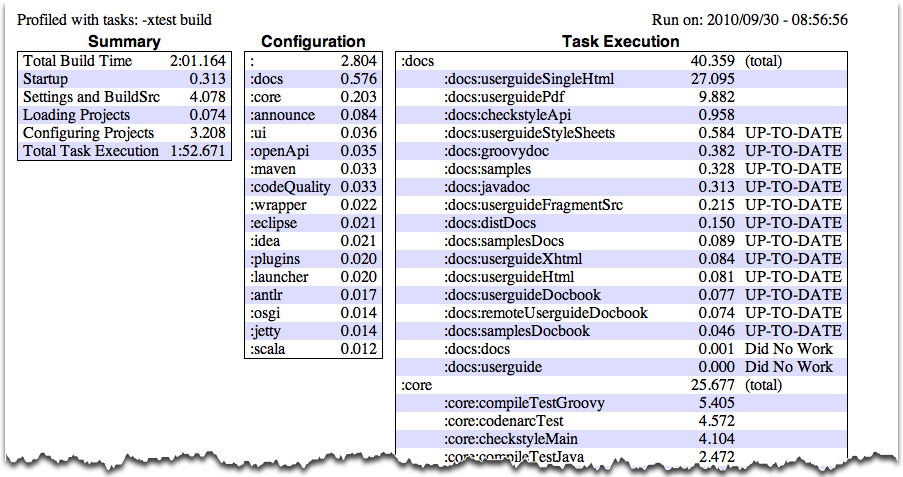

buildFile: /home/user/gradle/samples/userguide/tutorial/projectReports/api/build.gradleThe --profile command line option will record some useful timing information

while your build is running and write a report to the build/reports/profile directory.

The report will be named using the time when the build was run.

This report lists summary times and details for both the configuration phase and task execution. The times for configuration and task execution are sorted with the most expensive operations first. The task execution results also indicate if any tasks were skipped (and the reason) or if tasks that were not skipped did no work.

Builds which utilize a buildSrc directory will generate a second profile report for buildSrc in the

buildSrc/build directory.


Sometimes you want to know which tasks would be executed, and in which order, for a given set of tasks specified on the command line, without actually executing them. You can use the -m option for this. For example, gradle -m clean compile shows you all tasks that would be executed as part of the clean and compile tasks. This is complementary to the tasks task, which shows you the tasks which are available for execution.
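As a small illustration, consider the following sketch of a build script. The task names clean and compile mirror the command used above; the resources task and the printed messages are made up for this example.

```groovy
// build.gradle (illustrative sketch)
task clean << {
    println 'Deleting build output'
}

task resources << {
    println 'Preparing resources'
}

// compile depends on resources, so a dry run lists resources before compile
task compile(dependsOn: resources) << {
    println 'Compiling sources'
}
```

With this script, gradle -m clean compile should list clean, resources and compile in execution order, while none of the println statements run, because the task actions are never executed.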

In this chapter, you have seen some of the things you can do with Gradle from the command-line. You can find out more about the gradle command in Appendix C, Gradle Command Line.

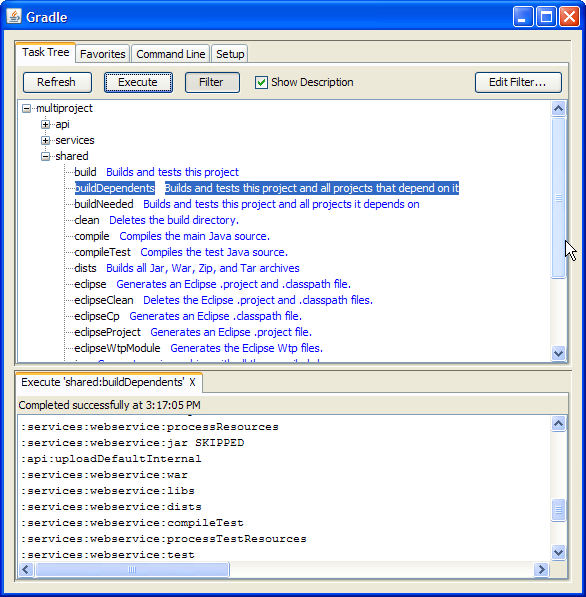

In addition to supporting a traditional command line interface, Gradle offers a graphical user interface. This is a standalone user interface that can be launched with the --gui option.

Note that this command blocks until the Gradle GUI is closed. Under *nix it is probably preferable to run this as a background task (gradle --gui&).

If you run this from your Gradle project working directory, you should see a tree of tasks.

It is preferable to run this command from your Gradle project directory so that the settings of the UI will be stored in your project directory. However, you can also run it from elsewhere and then change the working directory via the Setup tab in the UI.

The UI displays 4 tabs along the top and an output window along the bottom.

The Task Tree shows a hierarchical display of all projects and their tasks. Double clicking a task executes it.

There is also a filter so that uncommon tasks can be hidden. You can toggle the filter via the Filter button. Editing the filter allows you to configure which tasks and projects are shown. Hidden tasks show up in red. Note: newly created tasks will show up by default (versus being hidden by default).

The Task Tree context menu provides the following options:

- Execute ignoring dependencies. This does not require dependent projects to be rebuilt (same as the -a option).

- Add tasks to the favorites (see Favorites tab).

- Hide the selected tasks. This adds them to the filter.

- Edit the build.gradle file. Note: this requires Java 1.6 or higher and requires that you have .gradle files associated in your OS.

The Favorites tab is a place to store commonly-executed commands. These can be complex commands (anything that is legal on the gradle command line) and you can give them a display name. This is useful for creating, say, a custom build command that explicitly skips tests, documentation, and samples, which you could call "fast build".

You can reorder favorites to your liking and even export them to disk so they can be imported by others. When you edit a favorite, you are given the option "Always Show Live Output". This only applies if you have enabled 'Only Show Output When Errors Occur'; the override forces the output to be shown regardless.

The Command Line tab is a place to execute a single Gradle command directly. Just enter whatever you would normally enter after 'gradle' on the command line. This also provides a place to try out commands before adding them to favorites.

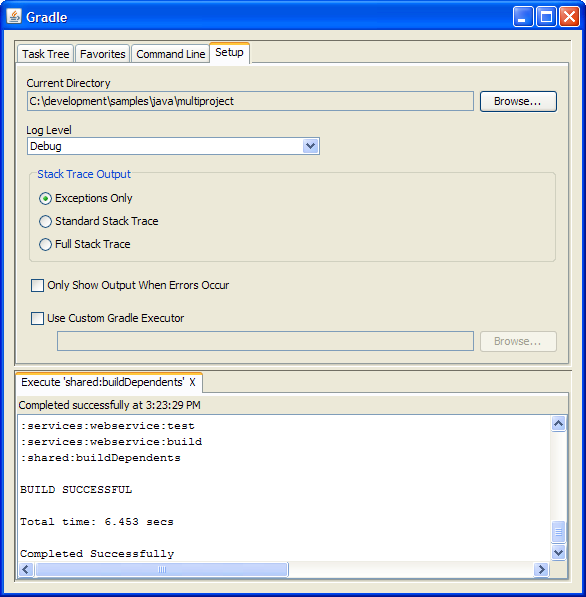

The Setup tab allows configuration of some general settings.

Current Directory

Defines the root directory of your gradle project (typically where build.gradle is located).

Stack Trace Output

This determines how much information to write out in stack traces when errors occur. Note: if you specify a stack trace level on either the Command Line or Favorites tab, it will override this stack trace level.

Only Show Output When Errors Occur

Enabling this option hides any output when a task is executed unless the build fails.

Use Custom Gradle Executor - Advanced feature

This provides you with an alternate way to launch gradle commands. This is useful if your project requires some extra setup that is done inside another batch file or shell script (such as specifying an init script).

A common situation is that multiple tasks depend on the existence of a directory. Of course you can deal with this by adding a mkdir to the beginning of those tasks, but this is rather bloated. There is a better solution (it works only if the tasks that need the directory have a dependsOn relationship):

Example 12.1. Directory creation with mkdir

build.gradle

classesDir = new File('build/classes')

task resources << {

    classesDir.mkdirs()

    // do something

}

task compile(dependsOn: 'resources') << {

    if (classesDir.isDirectory()) {

        println 'The class directory exists. I can operate'

    }

    // do something

}

Output of gradle -q compile

> gradle -q compile

The class directory exists. I can operate

Gradle also offers Directory Tasks to deal with this.

Example 12.2. Directory creation with Directory tasks

build.gradle

classes = dir('build/classes')

task resources(dependsOn: classes) << {

    // do something

}

task otherResources(dependsOn: classes) << {

    if (classes.dir.isDirectory()) {

        println 'The class directory exists. I can operate'

    }

    // do something

}

Output of gradle -q otherResources

> gradle -q otherResources

The class directory exists. I can operate

A Directory Task is a simple task whose name is a path relative to the project dir [3]. During the execution phase, the directory corresponding to this path is created if it does not exist yet. Another interesting thing to note in this example is that you can also pass task objects to the dependsOn declaration of a task.
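The following sketch shows one way task objects and task names might be combined in a single dependsOn declaration. The names reportsDir, collectData and report are invented for this illustration; only dir() and the dir property come from the example above.

```groovy
// build.gradle (illustrative sketch; task and property names are hypothetical)

// Directory task, as in Example 12.2
reportsDir = dir('build/reports')

task collectData << {
    println 'Collecting data'
}

// dependsOn accepts a list that mixes task objects and task names
task report(dependsOn: [reportsDir, 'collectData']) << {
    println "Writing report into ${reportsDir.dir}"
}
```

Referring to a task by name is useful when the task is defined later in the script, while passing the task object avoids repeating the directory path as a string.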

Gradle offers a variety of ways to add properties to your build. With the -D command line

option you can pass a system property to the JVM which runs Gradle. The -D option of the

gradle command has the same effect as the -D option of the

java command.

You can also directly add properties to your project objects using properties files. You can place a gradle.properties file in the Gradle user home directory (defaults to USER_HOME/.gradle) or in your project directory. For multi-project builds you can place gradle.properties files in any subproject directory. The properties of the gradle.properties files can be accessed via the project object. The properties file in the user's home directory has precedence over property files in the project directories.

You can also add properties directly to your project object via the -P command line option. For more exotic use cases you can even pass properties directly to the project object via system and environment properties. For example, suppose you run a build on a continuous integration server where you have no admin rights for the machine, and your build script needs properties whose values should not be seen by others. In that case you can't use the -P option, but you can add an environment property in the project administration section (invisible to normal users). [4] If the environment property follows the pattern ORG_GRADLE_PROJECT_propertyName=somevalue, propertyName is added to your project object. If in the future CI servers support Gradle directly, they might start Gradle via its main method. Therefore we already support the same mechanism for system properties. The only difference is the pattern, which is org.gradle.project.propertyName.
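As a brief sketch of the environment property mechanism, suppose the CI server exports a variable named ORG_GRADLE_PROJECT_repoPassword. Both the property name repoPassword and the publish task are hypothetical and only serve to illustrate the pattern.

```groovy
// build.gradle (illustrative sketch)
// Assumes the environment contains ORG_GRADLE_PROJECT_repoPassword=<secret>
// (repoPassword is a made-up property name for this example)
task publish << {
    // repoPassword is available like any other project property;
    // only its length is printed so the value itself never reaches the log
    println "Publishing with a password of ${repoPassword.length()} characters"
}
```

The same script should also work when the value is supplied as a system property instead, for example with -Dorg.gradle.project.repoPassword=somevalue on the command line.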

With the gradle.properties files you can also set system properties. If a property

in such a file has the prefix systemProp. the property and its value are added to the

system properties, without the prefix.

Example 12.3. Setting properties with a gradle.properties file

gradle.properties

gradlePropertiesProp=gradlePropertiesValue

systemPropertiesProp=shouldBeOverWrittenBySystemProp

envPropertiesProp=shouldBeOverWrittenByEnvProp

systemProp.system=systemValue

build.gradle

task printProps << {

println commandLineProjectProp

println gradlePropertiesProp

println systemProjectProp

println envProjectProp

println System.properties['system']

}

Output of gradle -q -PcommandLineProjectProp=commandLineProjectPropValue -Dorg.gradle.project.systemProjectProp=systemPropertyValue printProps

> gradle -q -PcommandLineProjectProp=commandLineProjectPropValue -Dorg.gradle.project.systemProjectProp=systemPropertyValue printProps

commandLineProjectPropValue

gradlePropertiesValue

systemPropertyValue

envPropertyValue

systemValue

You can access a project property in your build script simply by using its name as you would use a

variable. If the property does not exist, an exception is thrown and the build fails. If your

build script relies on optional properties the user might set for example in a gradle.properties file,

you need to check for existence before you can access them. You can do this by using the method